In this Beanstock interview, Harry Jackson of Subnet 58 (Handshake) lays out a thesis that’s worth understanding even if you never buy a single SN58 alpha token. He also explains where Bittensor’s agentic layer is heading.

Here is our distillation of the key points:

The one-line thesis

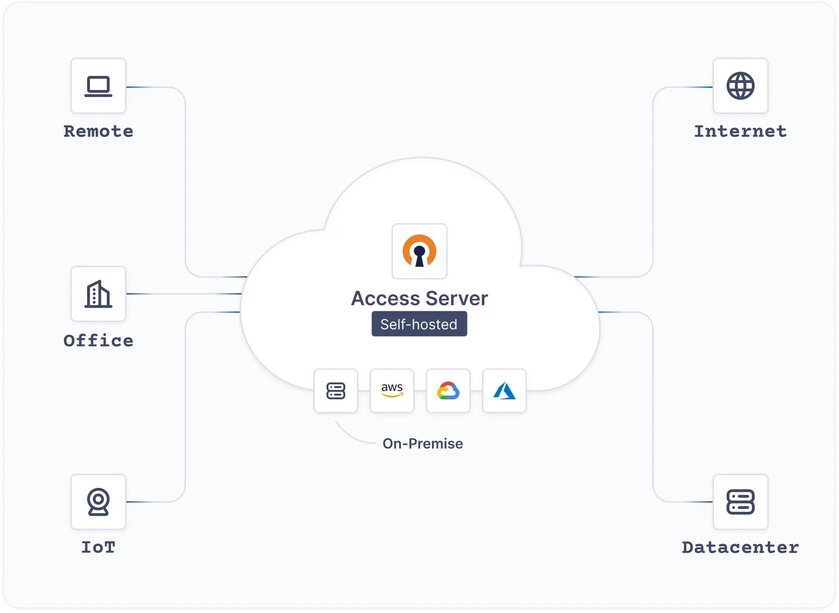

Handshake wants to be the front door to the agent economy on Bittensor: an Amazon-like gateway where AI agents discover, pay for, and stack together skills from across all 128 subnets.

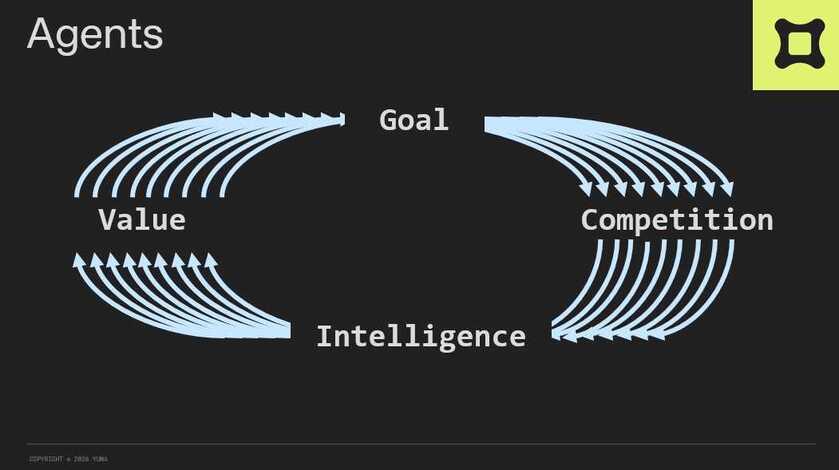

- There’s a critical distinction Harry emphasized: AI is intelligence, but agents need tooling. An LLM without payment rails, plugins, and workflow infrastructure is “a young person trying to cut a tree down with a pen knife.”

- Agent-to-agent commerce is on the edge of going viral. Harry’s prediction for the tipping point: a woman in her 40s lets her agent do her shopping end-to-end (research, stock check, autonomous payment), posts it to social media, and it becomes the “four-minute mile” moment everyone copies.

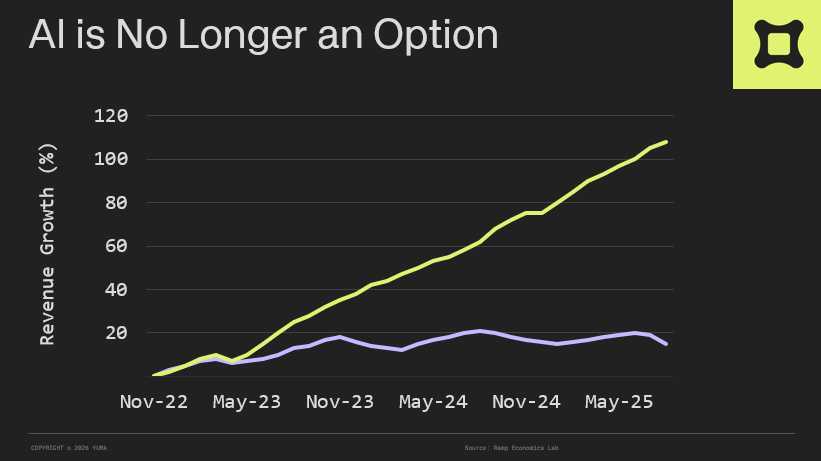

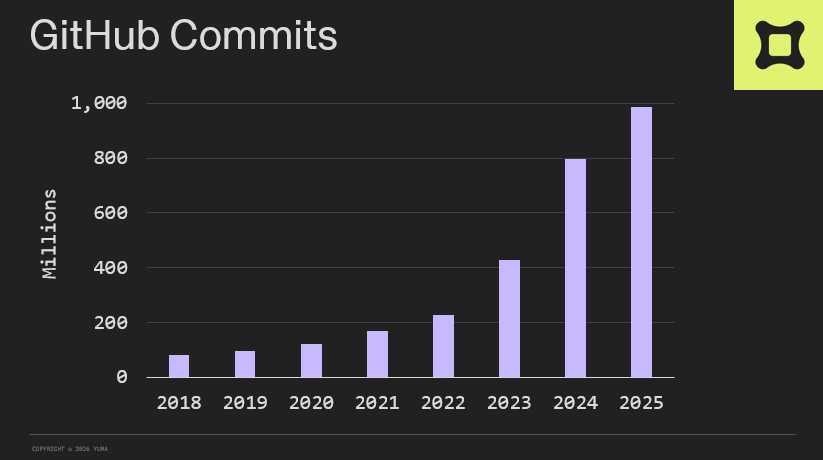

- Bittensor is uniquely positioned because agents don’t care about marketing or pretty UIs. They only care about best-in-class products and services. That’s exactly what Bittensor’s 128 subnets produce.

The product reality (what’s currently shipping)

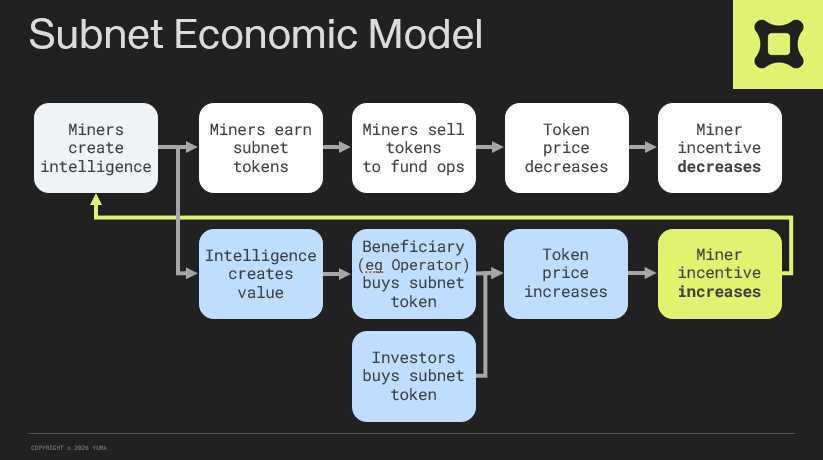

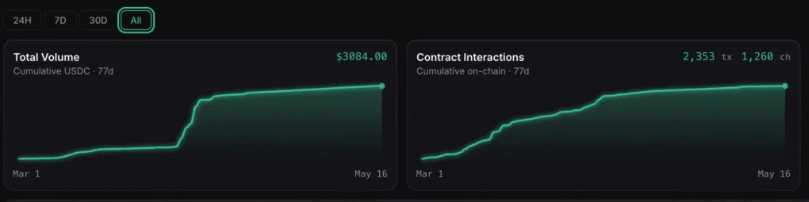

- Handshake is live with paying users generating a few thousand USD in revenue as of today. The business model: 2% of every transaction on the platform.

- The flywheel is Amazon-like: better skills → more agents arrive → providers get distribution → more skills get added → cycle repeats.

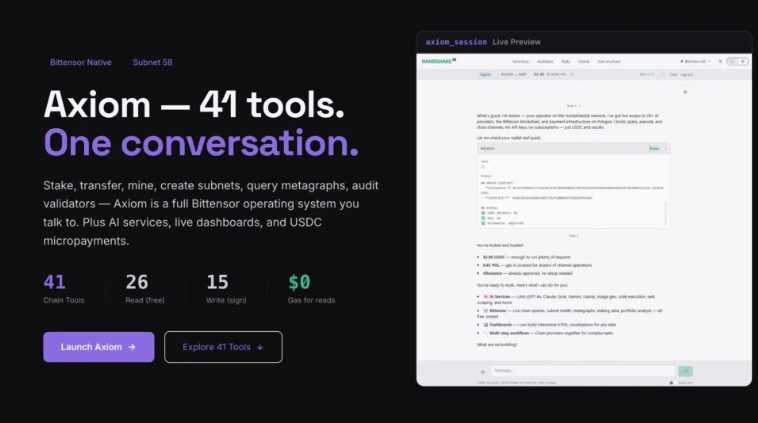

- The headline product on the way is Axiom, an agent that trades subnets while you sleep. It is built around the realization that what the Bittensor community wants from agents isn’t generic skills; it’s more TAO. Each “hole” the team finds in the agent becomes a new tradeable skill on the marketplace.
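
To make the 2% take rate concrete, here is a minimal sketch of the arithmetic. It assumes the fee is a flat 2% of each transaction's value, which is how the interview describes it. The function names and the dollar amounts are hypothetical, chosen only for illustration, and are not figures from Handshake.

```python
from decimal import Decimal

# 2% of every transaction, as stated in the interview.
TAKE_RATE = Decimal("0.02")


def platform_fee(amount: Decimal, rate: Decimal = TAKE_RATE) -> Decimal:
    """Return the platform's cut of a single transaction (hypothetical helper)."""
    if amount < 0:
        raise ValueError("amount must be non-negative")
    return amount * rate


def volume_for_revenue(revenue: Decimal, rate: Decimal = TAKE_RATE) -> Decimal:
    """Return the transaction volume needed to earn a given fee revenue."""
    if rate <= 0:
        raise ValueError("rate must be positive")
    return revenue / rate


if __name__ == "__main__":
    # Illustrative amounts only, not reported numbers.
    print(platform_fee(Decimal("50")))          # fee on a 50 USD transaction
    print(volume_for_revenue(Decimal("3000")))  # volume behind 3000 USD of fees
```

The takeaway is the multiplier: at a 2% take rate, every dollar of platform revenue implies fifty dollars of agent transactions flowing through the marketplace, which is why revenue doubles as a proxy for usage.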

The investment angles (read these carefully)

- The moat is data, not distribution. Every workflow run by an agent generates failure data, success data, and payment data. No outside competitor can replicate that without running the marketplace itself.

- The metric Harry tells you to judge them on is revenue. Not agent count. Not user count. Revenue, which is publicly visible on-chain via the front page of their site. He’s basically inviting investors to hold him to it.

- The pitch for emissions: the biggest TAM in Bittensor is the agent market, and Handshake is the most integrated subnet, meaning if Handshake wins, the subnets it routes to all win too. Bullish on agents + bullish on Bittensor = bullish on Handshake by transitive logic.

Where Harry stands on the conviction upgrade

- On the conviction upgrade and locked alpha: he’s fine with it. Handshake is a revenue-focused company, so locked alpha isn’t a survival issue. He acknowledges it’ll be harder on research-stage subnets that need to raise external capital, but argues most subnet founders are thinking long-term, not short-term extraction.

- On the broader vibe: he just got back from Bittensor events in Spain and San Francisco. He observed that the overwhelming reality of the ecosystem is people working hard to build the best products. “It’d be a lot easier in some ways to build a company outside of Bittensor.” The only reason to do it on Bittensor is if you actually want the moonshot.

Full interview below:
